What 1,500 Banking Professionals Taught Me About AI Adoption

I recently wrapped up a series of AI training workshops for Bank of China (Hong Kong). The scope: 1,530 participants across 13 countries. The first session alone had 617 people simultaneously online. At that scale, everything I thought I knew about AI training got stress-tested.

Here's what I learned.

The Misconception I Had to Unlearn

Before this engagement, I assumed enterprise AI training was about teaching tools. Show people ChatGPT, demonstrate some prompts, explain Copilot features. Get them comfortable with the interfaces.

I was wrong.

Tool proficiency is table stakes. The real challenge at scale isn't "can they use the tool?" - it's "will they change their behavior?" That's a fundamentally different problem. And solving it required rethinking my entire approach.

Lesson 1: Safety Is the Foundation, Not a Feature

Every AI conversation in banking eventually hits the same wall: data security. "Can I paste this customer data into ChatGPT?" "Is this compliant?" "What if I accidentally leak something?"

I developed what I call the "traffic light protocol" - a simple mental model that categorizes data sensitivity:

- Green: Public information, general knowledge queries. Go ahead.

- Yellow: Internal documents, general business data. Proceed with caution, strip identifiers.

- Red: Customer PII, financial records, strategic data. Full stop.

The framework isn't complicated. That's the point. When you're training 1,500 people across different roles and technical backgrounds, complexity kills adoption. People need a decision framework they can apply instantly without checking documentation.
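For teams that want to encode the same rule in an internal tool or pre-submission check, here is a minimal Python sketch of the traffic light lookup. The category labels, the `classify` and `most_restrictive` helpers, and the choice to treat unknown categories as red are all my own illustrative assumptions, not something deployed in the workshops.

```python
from enum import Enum


class Light(Enum):
    GREEN = "Go ahead."
    YELLOW = "Proceed with caution, strip identifiers."
    RED = "Full stop."


# Hypothetical category labels, loosely based on the examples in the list above.
CATEGORY_TO_LIGHT = {
    "public_information": Light.GREEN,
    "general_knowledge": Light.GREEN,
    "internal_document": Light.YELLOW,
    "general_business_data": Light.YELLOW,
    "customer_pii": Light.RED,
    "financial_record": Light.RED,
    "strategic_data": Light.RED,
}

# Ordering from least to most restrictive.
SEVERITY = [Light.GREEN, Light.YELLOW, Light.RED]


def classify(category: str) -> Light:
    """Return the light for one data category.

    Assumption: anything not explicitly listed is treated as RED.
    """
    return CATEGORY_TO_LIGHT.get(category, Light.RED)


def most_restrictive(categories: list[str]) -> Light:
    """Return the strictest light among all categories present in a prompt.

    Assumption: an empty list is also treated as RED, the conservative choice.
    """
    return max(
        (classify(c) for c in categories),
        key=SEVERITY.index,
        default=Light.RED,
    )


if __name__ == "__main__":
    light = most_restrictive(["public_information", "internal_document"])
    print(light.name, "-", light.value)
```

The one design choice worth noting is that a prompt mixing several kinds of data takes the strictest light among them, which matches how the mental model is meant to be applied: one red item stops the whole request.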

What surprised me: spending the first 20 minutes of every workshop on safety didn't slow things down. It accelerated them. Participants stopped second-guessing themselves. They knew their boundaries. They experimented more confidently within the green zone.

Lesson 2: Meet People Where They Are

The temptation in AI training is to show what's possible. Look at this image generator. Look at this code interpreter. Look at these advanced agents.

But transformation happens when AI fits into existing workflows, not when it creates new ones.

For the BOCHK team, this meant different approaches for different groups. The training followed a three-layer structure:

Layer 1: Office Efficiency - How does Copilot integrate with the Excel models you already use? How do you summarize the meeting notes you already take? This isn't flashy, but it's where daily time savings accumulate.

Layer 2: Creative Workflows - For marketing and communications teams, I covered tools like Gamma for presentations and image generation for campaign visuals. The deliverables stay the same; they're just produced faster.

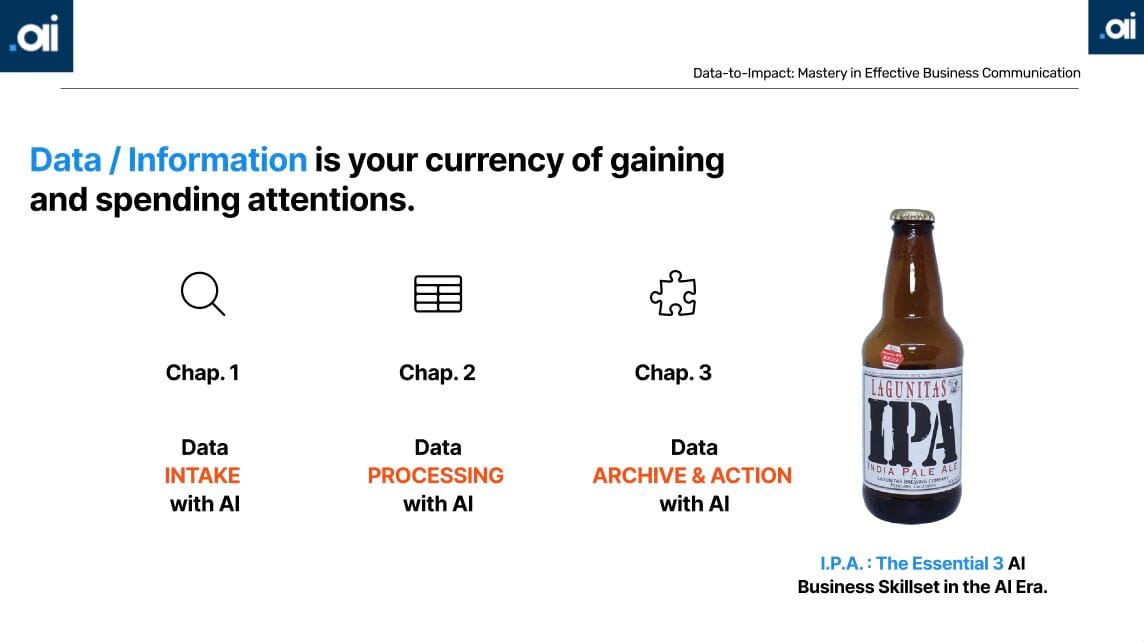

Layer 3: Decision Support - For senior staff, I introduced an IPA framework: Input (what data goes in), Process (how AI analyzes it), Action (what decisions result). This reframed AI from "tool" to "thinking partner" for strategic work.

The key insight: don't ask people to learn new workflows. Show them how AI slots into the workflows they've already mastered.

Lesson 3: The 70/30 Split

Here's a number I keep returning to: 70%.

That's roughly the portion of execution work that, in my experience, AI can handle well - first drafts, data synthesis, research compilation, formatting. The remaining 30% - judgment, context, stakeholder awareness, strategic framing - stays human.

I shared this ratio explicitly in the workshops. It changed the conversation.

Instead of "will AI take my job?" the question became "what's my 30%?" That's a much more productive frame. Participants started identifying their unique value - the institutional knowledge, the relationship context, the judgment calls that no model can replicate.

The teams that embraced the 70/30 split didn't just adopt AI faster. They became more strategic about their own contributions. When you know execution is handled, you focus on the work that actually requires you.

What I Didn't Expect

Scale requires systems, not just content. Delivering the same material across 13 countries means bilingual documentation, timezone flexibility, and consistency that doesn't depend on me being present. I built reusable modules - the "traffic light" framework, the IPA model - that teams could reference after the training ended.

The real feedback loop is behavioral. Satisfaction scores are nice (we hit 9.2/10), but what I watched for was different: were people using these tools a week later? A month later? Were they teaching colleagues? That's the signal that matters.

Starting with safety built trust. Several participants told me afterward: "Finally, AI training that's actually useful." When I asked what made the difference, the answer was usually the same - they felt we had addressed their real concerns rather than just showing off features.

The Takeaway

Training 1,500 banking professionals didn't teach me much about AI tools. It taught me about organizational change.

People don't resist AI because they don't understand it. They resist because they're uncertain about boundaries, worried about compliance, and skeptical that it fits their actual work. Address those concerns first - with simple frameworks, integrated workflows, and clear human/AI boundaries - and adoption follows.

The technology keeps advancing. The human challenges remain remarkably consistent.

If you're planning enterprise AI adoption and want to discuss what might work for your organization, feel free to connect with me on LinkedIn.